AD-TECH


On my second day in San Francisco, I went for a run of about 10 km this morning in heavy wind and rain. I saw many local runners out in such bad weather, so I have come to believe that running even in bad conditions is the spirit of the “Bay Area”. In spirit, at least, I have truly become a Bay Area runner.

By the way, I am attending the conference of the Association for the Advancement of Artificial Intelligence (AAAI), and this article covers the sessions on the second day. The report for the first day is here.

In this series of articles, I am reporting on the sessions at AAAI.

AAAI-17 Invited Talk

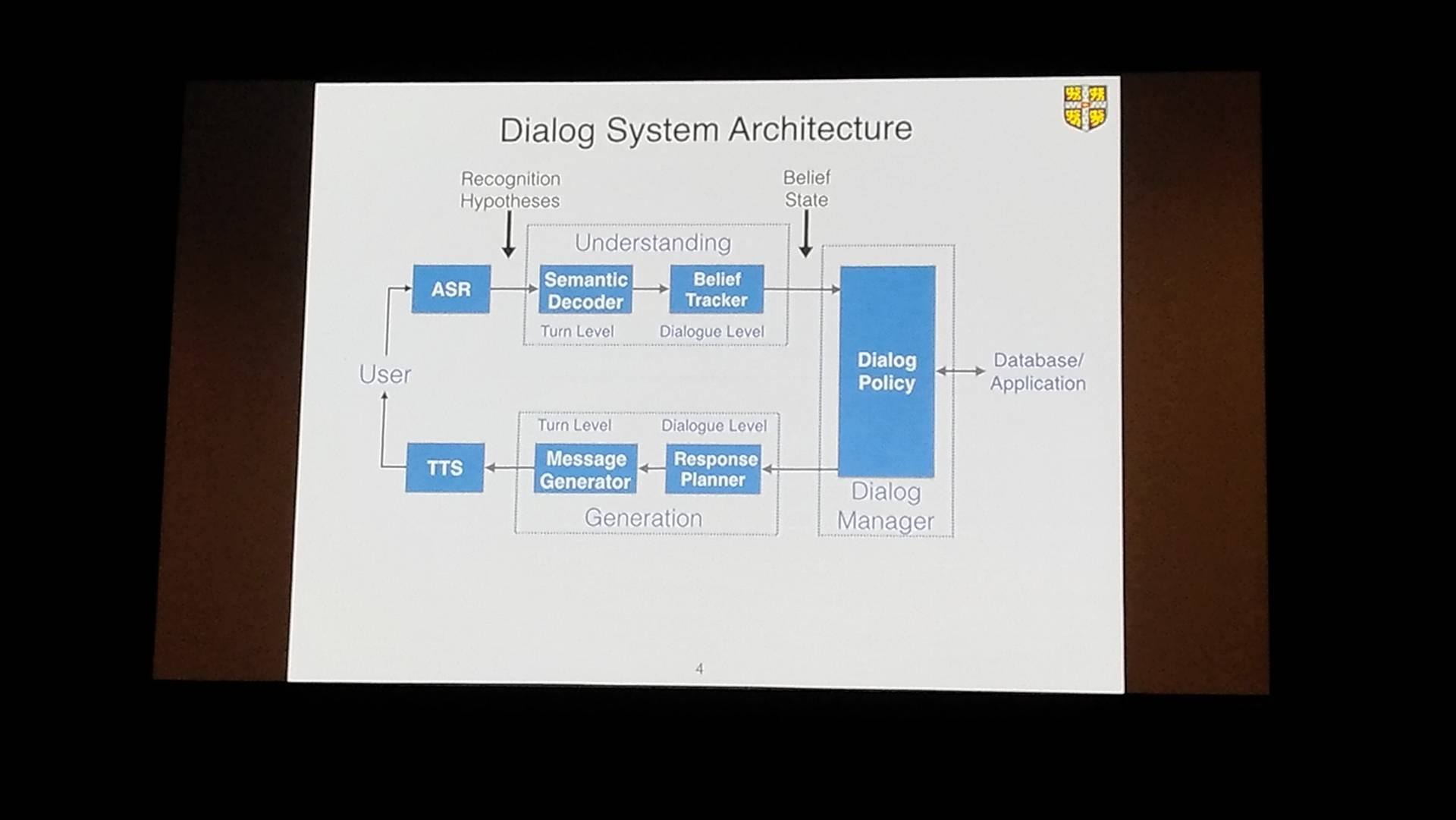

This talk was given by Steve Young of the University of Cambridge. It ranged from a basic introduction to dialog systems to advanced architectures and methods for training the models with deep learning (CNN, LSTM).

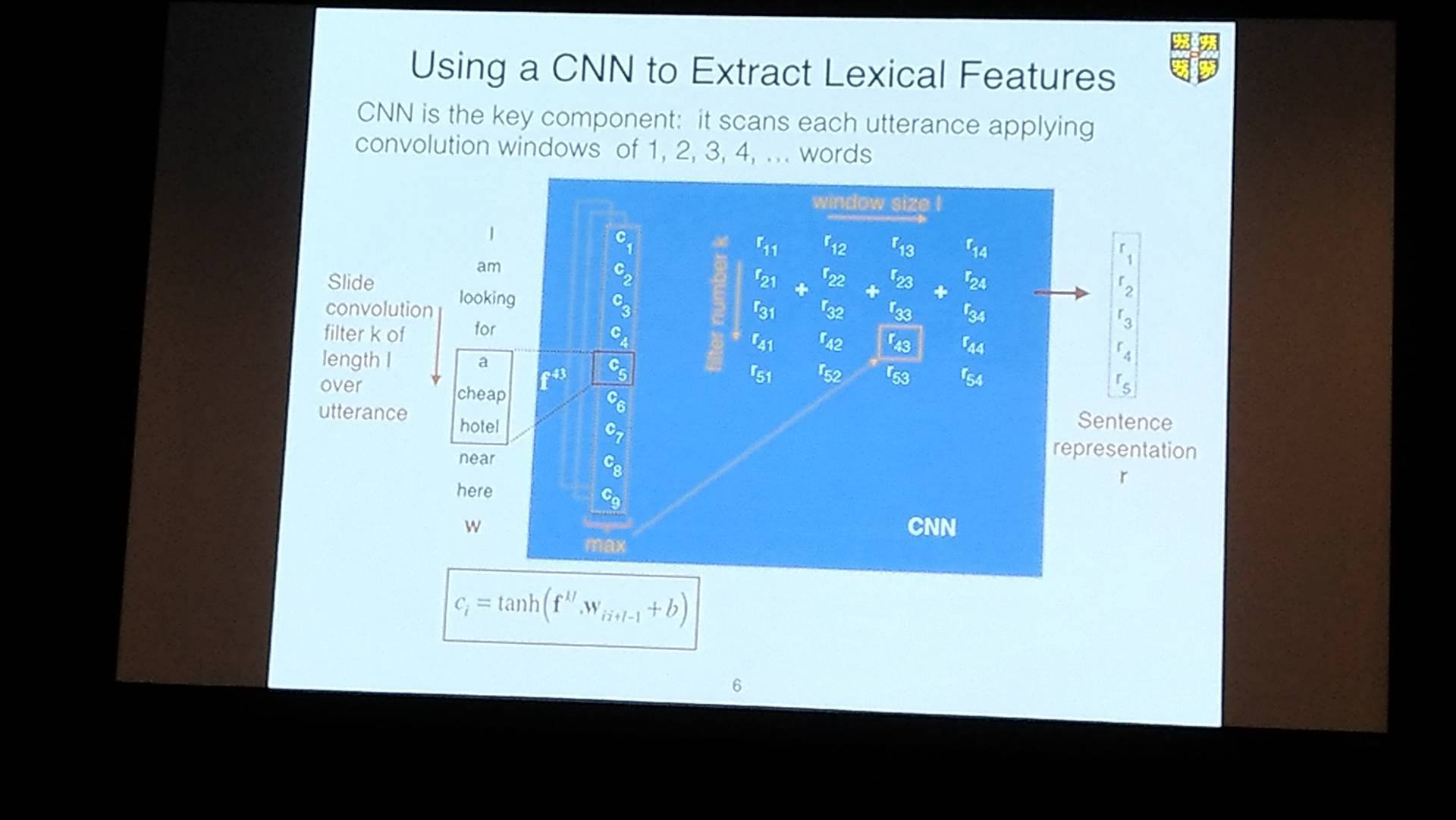

Figure 1: Extraction of lexical features with a CNN.

The presentation seemed to be pitched at beginners, but unfortunately the only thing I understood was that creating lexical features from text is useful. Please do not ask me about this session any more…

ML8: Feature Construction / Reformulation

From the title, I assumed this would be a 101-level talk on generating features, but it went much deeper than I expected.

The speaker introduced Random Features, which map high-dimensional data into a low-dimensional randomized feature space under the assumption of a shift-invariant kernel.

He also described a new algorithm that adds moment matching to the original method, as well as a noise reduction method that achieves better performance by applying PCA in frequency space.

Here, “Feature Construction” means constructing features in frequency space or as a low-dimensional representation, rather than “creating features” in the sense of classical prediction.
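To make the Random Features idea more concrete, here is a minimal sketch of random Fourier features for one particular shift-invariant kernel, the RBF kernel exp(-gamma * ||x - y||^2). The function names (`make_rff`, `rbf_kernel`), the choice of kernel, and all parameter values are my own assumptions for illustration. It covers only the basic construction, not the moment matching or PCA variants mentioned in the session.

```python
import math
import random


def rbf_kernel(x, y, gamma):
    """Exact RBF kernel exp(-gamma * ||x - y||^2), used here as the reference."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def make_rff(input_dim, num_features, gamma, seed=0):
    """Build a feature map z such that z(x) . z(y) approximates rbf_kernel(x, y)."""
    rng = random.Random(seed)
    # The Fourier transform of this kernel is Gaussian with std sqrt(2 * gamma),
    # so the random frequencies are drawn from that distribution.
    sigma = math.sqrt(2.0 * gamma)
    weights = [[rng.gauss(0.0, sigma) for _ in range(input_dim)]
               for _ in range(num_features)]
    # Random phase offsets, uniform on [0, 2*pi).
    offsets = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(num_features)]
    scale = math.sqrt(2.0 / num_features)

    def transform(x):
        return [scale * math.cos(sum(w_i * x_i for w_i, x_i in zip(w, x)) + b)
                for w, b in zip(weights, offsets)]

    return transform


# Illustrative inputs (made up for this example).
x = [0.5, -1.0, 2.0]
y = [0.0, -0.5, 1.5]
z = make_rff(input_dim=3, num_features=2000, gamma=0.5)
approx = sum(a * b for a, b in zip(z(x), z(y)))
exact = rbf_kernel(x, y, gamma=0.5)
print(approx, exact)
```

The dot product of the two random feature vectors should come out close to the exact kernel value, and the approximation generally tightens as `num_features` grows.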

ML11: Bayesian Learning

Since the movement of a human or robot arm can be assumed to lie on a hypersphere or hypertorus, the speaker proposed a method that extends the generalized von Mises distribution to the multivariate case in order to estimate such movement.

Another talk was about Bayesian networks that can handle values that are missing not at random.
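As background for why angular data needs its own distribution, here is a small sketch using the ordinary univariate von Mises distribution from Python's standard library. It is not the multivariate generalization presented in the talk. The helper names and the parameter values (`true_mu`, `true_kappa`) are assumptions chosen only for illustration.

```python
import math
import random


def circular_mean(angles):
    """Mean direction of angles in radians, returned in (-pi, pi]."""
    s = sum(math.sin(a) for a in angles)
    c = sum(math.cos(a) for a in angles)
    return math.atan2(s, c)


def mean_resultant_length(angles):
    """Concentration summary in [0, 1]; values near 1 mean tightly clustered angles."""
    s = sum(math.sin(a) for a in angles)
    c = sum(math.cos(a) for a in angles)
    return math.hypot(s, c) / len(angles)


# An ordinary average of 350 and 10 degrees gives 180 degrees, which points the
# wrong way; the circular mean gives a direction near 0 degrees instead.
wrapped = circular_mean([math.radians(350), math.radians(10)])

# Draw angles from a von Mises distribution and recover its mean direction.
rng = random.Random(0)
true_mu, true_kappa = 1.0, 4.0  # hypothetical parameters
samples = [rng.vonmisesvariate(true_mu, true_kappa) for _ in range(5000)]
estimated_mu = circular_mean(samples)
resultant = mean_resultant_length(samples)
print(wrapped, estimated_mu, resultant)
```

The estimated mean direction should land close to `true_mu`, and a larger `true_kappa` should push the mean resultant length toward 1.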

I’d like to read “statistical science of survey observation” by Dr. Hoshino again.

ML14: Clustering

I decided to attend this session because my colleague was attending the other one. It covered the following topics.

- A method that assigns multiple centroids to each cluster, which outperforms a conventional method, Graph Regularized NMF (Cai et al., 2011), in terms of Accuracy and Normalized Mutual Information.

- The Optimal Neighborhood Kernel Clustering (ONKC) method, an extension of Multiple Kernel K-means (MKKM).

- A new evaluation measure for clustering, the “Clustering Agreement Index” (CAI).

One attendee argued that the proposed method is not guaranteed to converge to the global optimum, and that there might be a problem in the mathematical optimization or in the problem setting.

I will keep following the research on clustering with multiple centroids.
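For reference, Normalized Mutual Information, one of the two metrics named in the first item above, can be computed as in the sketch below. Several normalizations are in use; this one divides by the arithmetic mean of the two entropies, which is an assumption on my part and may differ from what the paper used. The function names and the label vectors are made up.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (natural log) of a label assignment."""
    n = len(labels)
    return -sum((c / n) * math.log(c / n) for c in Counter(labels).values())


def mutual_information(a, b):
    """Mutual information between two label assignments of the same points."""
    n = len(a)
    count_a, count_b = Counter(a), Counter(b)
    joint = Counter(zip(a, b))
    return sum((c / n) * math.log(c * n / (count_a[x] * count_b[y]))
               for (x, y), c in joint.items())


def nmi(a, b):
    """NMI normalized by the arithmetic mean of the two entropies."""
    h_a, h_b = entropy(a), entropy(b)
    if h_a == 0.0 and h_b == 0.0:
        return 1.0  # both assignments put everything into a single cluster
    return 2.0 * mutual_information(a, b) / (h_a + h_b)


truth = [0, 0, 1, 1]
same_up_to_renaming = nmi(truth, [1, 1, 0, 0])
unrelated = nmi(truth, [0, 1, 0, 1])
print(same_up_to_renaming, unrelated)
```

Because NMI ignores how the clusters are named, a prediction that matches the ground truth up to relabeling scores 1, while a split that carries no information about the truth scores 0.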

ML17: Classification and Clustering

Since I wanted to make today a day of clustering, I went to another clustering session. It covered the following topics.

- A matrix decomposition algorithm for skewed data that uses an asymmetric loss function.

- Applying multiple clustering methods to the data and combining the results, as in ensemble methods.

- Combining clusterings with an objective function that is a linear combination of a utility function and the co-association matrix.

- A robust SVM whose loss function uses an arbitrary Lp norm.

These are all very interesting, and I hope to use them in my own work.

In particular, the ensemble clustering method seems intriguing, so I will look into it further.
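As a note to myself on what a co-association matrix is, here is a minimal sketch of the generic idea: count how often each pair of points lands in the same cluster across several clusterings, then group points that agree often enough. This is not the objective function presented in the session, which also involved a utility function. The function names, the threshold, and the example partitions are all hypothetical.

```python
def co_association(partitions):
    """Fraction of partitions in which each pair of points shares a cluster."""
    n = len(partitions[0])
    m = len(partitions)
    return [[sum(p[i] == p[j] for p in partitions) / m for j in range(n)]
            for i in range(n)]


def consensus_labels(partitions, threshold=0.5):
    """Link pairs whose co-association exceeds the threshold, then label
    each connected component as one consensus cluster."""
    matrix = co_association(partitions)
    n = len(matrix)
    labels = [-1] * n
    current = 0
    for start in range(n):
        if labels[start] != -1:
            continue
        labels[start] = current
        stack = [start]
        while stack:
            i = stack.pop()
            for j in range(n):
                if labels[j] == -1 and matrix[i][j] > threshold:
                    labels[j] = current
                    stack.append(j)
        current += 1
    return labels


# Three clusterings of six points; cluster names differ between runs,
# and the third run disagrees about points 2 and 3.
partitions = [
    [0, 0, 0, 1, 1, 1],
    [1, 1, 1, 0, 0, 0],
    [0, 0, 1, 1, 2, 2],
]
consensus = consensus_labels(partitions)
print(consensus)
```

The matrix does not depend on how each run names its clusters, which is what makes it convenient for combining the outputs of different methods.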

AAAI-17 Invited Talk

The talk was presented by Peter Dayan of University College London. In short, reinforcement learning in AI resembles the learning mechanisms of neurons in the brain. He illustrated this with several examples, and argued that the problem setting, the estimation method, and the environment are all essential to experiments.

Figure 2: A video of the learning process in which pigeons learn to peck a light in order to be fed.

That concludes my report on the second day of AAAI.
